The ideas which are here expressed so laboriously are extremely simple and should be obvious. The difficulty lies, not in the new ideas, but in escaping from the old ones – Keynes.

This note describes some mathematical techniques for describing the operation and architecture of computer programs and devices. One novel aspect is the use of primitive recursion to define large scale state machines and state machine products. The basic idea is to treat state machines as maps from finite sequences of events to output values. If \(E\) is a set of discrete events that can change system state and \(X\) is a set of output values, a “state variable” is a function mapping finite sequences over \(E\) to elements of \(X\). Each finite sequence describes the events that have driven a system from its initial state to its current state. The next section shows how to define and compose these state variables, provides some simple examples, and explains briefly how composing those variables corresponds to different automata products. The following section shows the equivalence between these state variables and state machines. The fourth section goes over two motivating examples, one from digital circuits and one from networks and distributed consensus. The final section contains a short discussion contrasting this work with “formal methods” such as temporal logics.

State machines as recursive functions

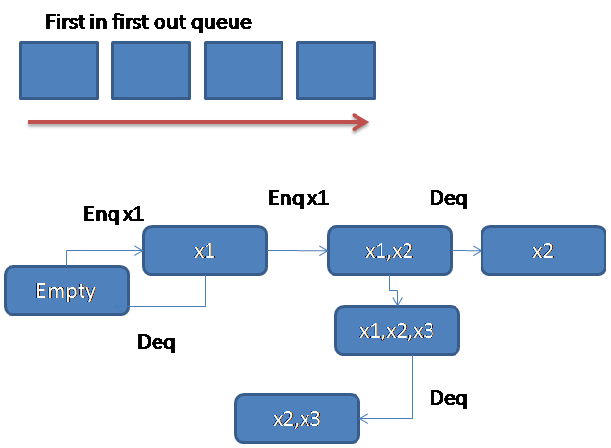

Let \(E\) be a finite set of “events” that can cause a deterministic discrete state system to change state and \(E^*\) the set of finite sequences over \(E\). In this note, a “state variable” is a function from some \(E^*\) to some set of outputs \(X\). If \(y\) is a state variable and \(s\) is a finite sequence of events, \(y(s)\) is the output of the system described by \(y\) in the state determined by \(s\). Let \(\mbox{nulls}\) be the empty sequence, so \(y(\mbox{nulls})\) is the output in the initial state. Let \(s\mbox{ after }e\) be the sequence obtained by appending \(e\) to \(s\) on the right. For a set of outputs \(X\), a map \(h: X\times E\to X\) and a constant \(c \in X\), \[y(\mbox{nulls})=c, \quad y(s\mbox{ after }e) = h(y(s),e)\] defines \(y\) in every state. The state variable \(C\) defined by \[C(\mbox{nulls})=0, \quad C(s \mbox{ after } e) = (C(s)+1) \bmod k\] counts the number of events mod \(k\) since the start state. These state functions are, as shown below, representations of state machines.
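The recursion scheme above is a left fold over the event sequence. Here is a minimal Python sketch of it, assuming sequences are ordinary Python iterables read oldest event first; the helper names `state_variable` and `mod_counter` are made up for this illustration.

```python
from typing import Callable, Iterable, TypeVar

E = TypeVar("E")  # events
X = TypeVar("X")  # outputs

def state_variable(c: X, h: Callable[[X, E], X]) -> Callable[[Iterable[E]], X]:
    """Return y with y(nulls) = c and y(s after e) = h(y(s), e)."""
    def y(s: Iterable[E]) -> X:
        out = c
        for e in s:          # oldest event first
            out = h(out, e)
        return out
    return y

def mod_counter(k: int) -> Callable[[Iterable[object]], int]:
    """The counter C: number of events mod k since the start state."""
    return state_variable(0, lambda x, e: (x + 1) % k)

C = mod_counter(3)
print(C(()))        # 0, the output in the initial state
print(C("abcd"))    # 1, four events mod 3
```

Nothing in the sketch stores a state set or a transition table; the state is implicit in the sequence, which is the point of the definition.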


Simple composition, e.g. \(x(s) = h(y_1(s), y_2(s))\), corresponds to the “direct (cross) product” of state machines. Recursive composition permits arbitrary connection of components. For example, “\(x(\mbox{nulls}) = c\)” and “\(x(s \mbox{ after } e) = h(y(s), x(s), e)\)” define \(x\) by connecting the output of \(y\) to the input of a new state variable, which corresponds to the cascade product of state machines (where information flows in only one direction). Simultaneous primitive recursion can describe a more general composition and communication with feedback, such as processes or agents connected via message passing or shared memory, or logical connections of digital circuits. As an example of cascade composition, take the mod \(k\) counter \(C\) defined above and construct a mod \(k^2\) counter as follows:

\[ C_2(nulls) = 0\] \[ C_2(s\mbox{ after }e) = \begin{cases}(C_2(s)+1) \bmod k&\mbox{if }C(s) = k-1\\ C_2(s)& \mbox{otherwise}\end{cases}\]

The same method can be made more modular, constructing new state variables only from previously defined state variables (which may not all have the same event sets), by using state variables as “transducers”. For example, \(u(s)\) can be defined to append a new event only every \(k\) state changes.


\[u(nulls) = nulls\] \[u(s\mbox{ after }e) = \begin{cases} u(s)\mbox{ after }e & \mbox{if } C(s) = k-1\\ u(s)&\mbox{otherwise}\end{cases}\] \[C_3(s) = C(u(s))\]

We can show that \(C_3(s) = C_2(s)\) by induction on s.
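The induction can be cross-checked by brute force. This is a small Python sketch, assuming sequences are tuples of arbitrary events; the function names mirror the symbols above, and `agree` is a hypothetical helper. An exhaustive check over short sequences is evidence, not a substitute for the proof.

```python
from itertools import product

def C(s, k):
    """C(nulls) = 0, C(s after e) = (C(s) + 1) mod k: only the length of s matters."""
    return len(s) % k

def C2(s, k):
    """Cascade counter: advances when C(s) = k - 1 just before an event."""
    out = 0
    for i in range(len(s)):
        if C(s[:i], k) == k - 1:
            out = (out + 1) % k
    return out

def u(s, k):
    """Transducer: passes an event through only when C(s) = k - 1 just before it."""
    out = ()
    for i, e in enumerate(s):
        if C(s[:i], k) == k - 1:
            out = out + (e,)
    return out

def C3(s, k):
    return C(u(s, k), k)

def agree(k, max_len, alphabet="ab"):
    """Check C3 = C2 on every sequence over alphabet up to max_len."""
    return all(C3(s, k) == C2(s, k)
               for n in range(max_len + 1)
               for s in product(alphabet, repeat=n))

print(agree(2, 8), agree(3, 8))   # True True
```

In this sketch `C(s, k) + k * C2(s, k)` equals `len(s) % k**2`. That is the sense in which the pair, read as two base \(k\) digits, behaves as a mod \(k^2\) counter.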

More generally, suppose \(y(s)\) is defined over event set \(E_1\) and \(x(s)\) is defined over event set \(E_2\). Define a state variable \(u\) to produce a sequence over \(E_2\) as follows:

\[u(nulls)= nulls\] \[u(s\mbox{ after }e) = u(s)\mbox{ after }h(y(s),e) \mbox{ where }h(y(s),e) \in E_2\]

Then let \(x'(s) = x(u(s))\). When it is clear from context that \(u\) depends on \(s\), this becomes \(x' = x(u)\). The output of the first state component controls the input of the second one: control and information cascade from the first to the second state variable.

Simultaneous recursion generalizes the composition to allow arbitrary communication. Suppose each \(y_i\) is defined on sequences over a set \(E_i, \mbox{ for }i=1,… n\) and then:

\(u_i(nulls)= nulls\) and \[u_i(s\mbox{ after }e) = u_i(s)\mbox{ after } h_i(y_1(u_1(s)),\dots, y_n(u_n(s)),e) \mbox{ where }h_i(\dots)\mbox{ is in }E_i\]

Where components change state at different rates, we can concatenate a sequence instead of appending one event. It is important, however, that \(u(s)\) be a prefix of \(u(s\mbox{ after }e)\) in order to make the product well defined (so the past cannot change). The transducer requirement for a well defined product is that \(u(nulls)= nulls\) and \(u(s) \mbox{ is a prefix of } u(s\mbox{ after } e)\).
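The simultaneous recursion can be sketched in a few lines of Python. It assumes each component appends exactly one event per system event, so the prefix requirement holds automatically. The name `run_product` and the two parity components are invented for the illustration; the feedback maps take the tuple of all component outputs plus the event.

```python
def run_product(ys, hs, s):
    """Return [u_1(s), ..., u_n(s)].

    ys[i] maps a tuple of events over E_i to an output;
    hs[i](outputs, e) returns the event in E_i appended to u_i.
    """
    us = [() for _ in ys]
    for e in s:
        outputs = tuple(y(u) for y, u in zip(ys, us))        # y_i(u_i(s))
        us = [u + (h(outputs, e),) for h, u in zip(hs, us)]  # all u_i step together
    return us

# Two parity components over E_1 = E_2 = {0, 1} with feedback in both directions.
parity = lambda v: sum(v) % 2
ys = [parity, parity]
hs = [lambda out, e: e ^ out[1],   # component 1 sees the event xor component 2's output
      lambda out, e: out[0]]       # component 2 sees component 1's output

print(run_product(ys, hs, (1, 1, 0)))   # [(1, 1, 1), (0, 1, 0)]
```

Because every `u_i` is only ever extended on the right, the value computed for a prefix of `s` is a prefix of the value computed for `s`.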

Connection to classical automata

A classical Moore machine can be given as

\[M = (δ, start, λ)\]

where \(δ: S× E→ S\) is the transition map for states \(S\) and events \(E\), \( λ:S→ X\) is the output map for output set \(X\), with the initial state \(start ∈ S\). Moore machines are defined to be finite state, but the slightly unorthodox Moore machines used here can have unbounded state sets – I’ll still call them Moore machines.

Let \(E^*\) be the set of finite sequences over \(E\) with \(\mbox{nulls} ∈ E^*\) the empty sequence. The map \(SF[M]: E^* → X\) can be constructed from \(M\) in two steps. First extend \(δ\) to a map \(δ':E^* → S\) by \(δ'(\mbox{nulls}) = start\) and \(δ'(s\mbox{ after }e) = δ(δ'(s),e)\). Then \(SF[M](s) = λ(δ'(s))\). So every Moore machine \(M\) is essentially a recipe for a primitive recursive function \(SF[M]:E^*\to X\).
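As an illustration, the two-step construction of \(SF[M]\) can be written directly. This is a sketch under the assumption that a machine is just a triple of Python callables and a start state; the class name `Moore` and the example machines `M3` and `M6` are hypothetical.

```python
from typing import Callable, Hashable, NamedTuple

class Moore(NamedTuple):
    delta: Callable    # (state, event) -> state
    start: Hashable
    lam: Callable      # state -> output

def SF(M):
    """Return the state variable SF[M]: run delta over s, then apply lam."""
    def f(s):
        state = M.start
        for e in s:
            state = M.delta(state, e)   # delta'(s after e) = delta(delta'(s), e)
        return M.lam(state)
    return f

# Two different machines that are recipes for the same state variable.
M3 = Moore(lambda q, e: (q + 1) % 3, 0, lambda q: q)
M6 = Moore(lambda q, e: (q + 1) % 6, 0, lambda q: q % 3)

print(SF(M3)("abcd"), SF(M6)("abcd"))   # 1 1
```

`M6` carries twice as many states as it needs, but the map from event sequences to outputs is the same as for `M3`.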

The reverse construction depends on the Myhill equivalence. Suppose we have \(f: E^* → X\). Let \(v \sim_f s\) if and only if \(f(s\mbox{ concat }z) = f(v \mbox{ concat }z)\) for all \(z \in E^*\). Then let \(\{s\}_f =\{ z: z\in E^*\mbox{ and }s\sim_f z\}\). Each \(\{s\}_f\) is a state in a state machine to be constructed from \(f\). Let \(δ_f(\{s\}_f,e)= \{s\mbox{ after }e\}_f\) and \(λ_f(\{s\}_f) = f(s)\). Thus, it’s possible to construct a Moore machine \(MM[f] = (δ_f,\{\mbox{nulls}\}_f, λ_f)\) from \(f\). It’s easy to show that \(SF[MM[f]] = f\). Distinct Moore machines may be recipes for the same state variable, so for distinct \(M\) and \(M'\) it is possible that \(SF[M] = SF[M']\), but the difference between the state machines must be limited to the naming of state elements and minimization of state (one or both may contain extra or even unreachable states). That difference may be important in some contexts, such as building state tables or circuit generation, but is artifactual here.
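The construction of \(MM[f]\) quantifies over all suffixes \(z\), so it cannot be executed literally. The Python sketch below approximates it by testing only suffixes up to a chosen length. This is an assumption made for the illustration: the result is only right when `max_len` is large enough to separate the classes of a finite state \(f\), and the search does not terminate for an \(f\) with infinitely many signatures. The names `MM`, `signature` and `run` are hypothetical.

```python
from itertools import product

def MM(f, alphabet, max_len):
    """Approximate MM[f]; a class is named by f's outputs on all suffixes up to max_len."""
    tests = [z for n in range(max_len + 1) for z in product(alphabet, repeat=n)]

    def signature(s):
        return tuple(f(s + z) for z in tests)

    start = signature(())
    reps = {start: ()}            # class -> one representative sequence
    delta = {}
    frontier = [()]
    while frontier:
        s = frontier.pop()
        for e in alphabet:
            t = s + (e,)
            sig = signature(t)
            delta[(signature(s), e)] = sig    # delta_f({s}, e) = {s after e}
            if sig not in reps:
                reps[sig] = t
                frontier.append(t)
    lam = {sig: sig[0] for sig in reps}       # tests[0] is the empty suffix, so sig[0] = f(s)
    return delta, start, lam

def run(delta, start, lam, s):
    state = start
    for e in s:
        state = delta[(state, e)]
    return lam[state]

f = lambda s: len(s) % 3 == 0                 # true when the length is a multiple of 3
delta, start, lam = MM(f, "ab", 3)
print(len(lam))                               # 3 equivalence classes
```

Running the constructed table with `run` reproduces `f`, which is the executable counterpart of \(SF[MM[f]] = f\) for this example.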

Say \(f:E^* → X\) is finite state if and only if \(MM[f]\) is finite state (that is, if there are a finite number of equivalence classes). If both E and the image of \(h\) are finite, then if \(f\) is defined by \[f (\mbox{nulls}) = c, \quad f(s\mbox{ after }e) = h(f(s),e)\] then \(f\) must be finite state.


The general product of state machines is given from a collection of “factor” Moore machines \(M_1,\dots, M_n\) and a collection \(\phi_1,\dots, \phi_n\) of “feedback maps”. The construction starts by setting the state set \(S\) to be the cross product of the state sets of the factors and the output map \(\lambda((s_1,\dots,s_n)) = (\lambda_1(s_1),\dots, \lambda_n(s_n))\) to be the application of the output maps of the factor machines. The interesting part is \(\delta(s,e) = (s_1',\dots,s_n')\) for \(s=(s_1,\dots,s_n)\), where \(s_i' = \delta_i(s_i,\phi_i(e,\lambda(s)))\). If each \(\phi_i\) depends only on \(e\) and \(\lambda_j(s_j)\) for \(j < i\) then the product is a cascade product. If each \(\phi_i\) depends only on \(e\), the product is a cross product. If each \(M_i\) is finite state, then the product must be finite state.
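The product construction translates almost word for word into code. In this sketch a machine is assumed to be a triple `(delta, start, lam)` of Python callables and a start state. The two-counter example and its event names `"inc"` and `"skip"` are invented for the illustration.

```python
def general_product(machines, phis):
    """machines[i] = (delta_i, start_i, lam_i); phis[i](e, outputs) is an event for factor i."""
    start = tuple(m[1] for m in machines)

    def lam(state):
        return tuple(m[2](s_i) for m, s_i in zip(machines, state))

    def delta(state, e):
        out = lam(state)   # every feedback map sees the outputs before the step
        return tuple(m[0](s_i, phi(e, out))
                     for m, phi, s_i in zip(machines, phis, state))

    return delta, start, lam

def counter(k):
    """Mod k counter over the events "inc" and "skip"."""
    return (lambda q, ev: (q + 1) % k if ev == "inc" else q, 0, lambda q: q)

k = 3
phis = [lambda e, out: "inc",                                   # depends only on e
        lambda e, out: "inc" if out[0] == k - 1 else "skip"]    # depends on factor 1 only
delta, start, lam = general_product([counter(k), counter(k)], phis)

state = start
for e in "xxxxxxx":        # seven events
    state = delta(state, e)
print(lam(state))          # (1, 2)
```

The second feedback map reads only the output of the first factor, so this particular product is a cascade. Letting the first map read `out[1]` as well would make it a product with feedback.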

Similarly, if \(f_i\) is finite state for \(i=1,\dots,n\) and \(u_i(nulls) = nulls\) and \(u_i(s\mbox{ after }e) = u_i(s)\mbox{ after }h_i(e,f_1(u_1(s)), \dots, f_n(u_n(s)))\) and each \(h_i\) has a finite range, then \(G(s) = g(f_1(u_1(s)), \dots, f_n(u_n(s)))\) must be finite state as well. This second proposition can be proved by showing that \(SF[M_G] = G\) where \(M_G\) is the general automata product of \(M_1,\dots,M_n\), the Moore machines constructed via equivalence classes from \(f_1,\dots,f_n\). Since the state set of the general product is a subset of the state set of the cross product, the result follows immediately.

Permitting unbounded state sets doesn’t weaken the semantics here, although it is often important to show that a device can be represented by a finite state machine. It is often convenient to be able to specify system properties using state variables without worrying about finiteness. For example, in network protocols sequence numbers have to either roll over safely or the protocol has to terminate because we can’t send arbitrary unbounded integers in real network packets – often the bound is too big to worry about or rollover behavior can be added later. Transducers used to construct products are not finite state – although they fold into finite state products if properly defined as shown above.

Automata, deterministic state machines, have a precise model of state and state change, and Moore type automata add a powerful model of information hiding: the difference between state and visible state (output) that is so essential to large scale system design. Classical automata are not only faithful models of computer systems, they also have deep mathematical semantic content. In particular, if you extend the Myhill equivalence to be a two sided congruence, you get a monoid, not a state machine. That is, set \(s\) and \(v\) equivalent under \(f\) (or \(y\) or whatever you want to call the sequence map) iff \(f(zsq) = f(zvq)\) for all sequences \(z\) and \(q\), where juxtaposition indicates concatenation. Then the equivalence classes form a monoid under the operation of concatenation of representative elements. Hartmanis, Krohn & Rhodes etc. showed that the underlying monoids of state machines have a decomposition property generalizing the Jordan-Hölder decomposition of group theory. This decomposition, under cascade products of state machines (wreath products of monoids), is interesting in itself but, of course, we often want to decompose (or maybe “factor” sounds better) state machines in ways that respect the architecture of the device or program, where communication generally is not as simple as a cascade. Being able to represent these more complex products using primitive recursion makes it practical to use them to represent composite systems. In another note I show that the engineering structure of computing systems seen as a general product of state machines has all sorts of interesting properties having to do with modularity. In particular the notion of modularity as information hiding from Parnas turns out to have a precise meaning in terms of general product decomposition. It seems possible, for example, to explain why microkernel operating systems are a bad idea from such decompositions.
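For a small machine the monoid can be enumerated. The sketch below assumes the machine is given by an explicit finite state list and that it is minimal (every state reachable, no two states equivalent). Under that assumption two sequences are congruent exactly when they induce the same map on states, so the monoid is the closure of the per-event maps under composition. The function name `transition_monoid` is hypothetical.

```python
def transition_monoid(states, alphabet, delta):
    """All maps on states induced by event sequences, each stored as a tuple of images."""
    states = list(states)
    index = {q: i for i, q in enumerate(states)}
    identity = tuple(states)                              # induced by the empty sequence
    gens = [tuple(delta(q, e) for q in states) for e in alphabet]
    monoid = {identity}
    frontier = [identity]
    while frontier:
        m = frontier.pop()
        for g in gens:
            mg = tuple(g[index[q]] for q in m)            # apply m, then one more event
            if mg not in monoid:
                monoid.add(mg)
                frontier.append(mg)
    return monoid

# Mod 3 counter over a single event: the monoid is the cyclic group of order 3.
print(len(transition_monoid(range(3), "e", lambda q, e: (q + 1) % 3)))        # 3

# Set/reset flip-flop: identity plus two constant maps, no inverses.
print(len(transition_monoid([0, 1], ["set", "reset"],
                            lambda q, e: 1 if e == "set" else 0)))            # 3
```

Both examples have three elements, but the first is a group and the second is not. That is the kind of structural difference the decomposition theory works with.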


Of course the classical automata are not used for large scale system design by humans because giant state sets are painful to work with and things like parameterization (e.g. a class of automata representing queues of some abstract object limited to some bound n) or real-time are clumsy, at best, and one tends to have to overspecify. Also products are awkward except at a very high level of abstraction. I hope to have improved things with this work.

Motivating examples

A couple of simple examples, one from hardware and one from software.

Gates and latches

Think of the events seen by digital circuits as “samples” of the signals being applied to their input pins over some fixed interval of time. So the events for a circuit with \(n\) input pins can be represented by vectors \(e=(e_1,\dots,e_n)\) where each element is either 1 or 0.

For what follows, I am going to steal a convention from C programming and consider boolean expressions used in a formula to have value 1 if true and 0 if false. So \((n+1)*(e_i = b)\) has value n+1 if the equality is true and has value 0 otherwise.

For \(b \in \{0,1\}\) let:

\[H(nulls,i,b) = 0, H(s\mbox{ after }e, i, b) = (H(s,i,b)+1)* (b= e_i)\]

So \(H(s,2,1) \) tells us how long pin 2 has been set to value 1 by counting events.

The state variable \(H\) doesn’t represent a device we could actually build; it is an unbounded state machine representing an abstract instrument measuring signals so we can specify how devices have to operate. In any concrete setting, we could make \(H\) finite state by specifying the largest time duration of interest. For example, if samples are at picosecond level, \(t> 10^{13}\) would usually not be interesting.
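Python shares the C convention that a true comparison counts as 1 in arithmetic, so the definition of \(H\) carries over nearly unchanged. This sketch assumes events are tuples of 0/1 values and that pins are numbered from 1 as in the text.

```python
def H(s, i, b):
    """Number of most recent consecutive events in s that held pin i at value b."""
    count = 0
    for e in s:
        count = (count + 1) * (e[i - 1] == b)   # resets to 0 when pin i differs from b
    return count

s = [(0, 1), (1, 1), (0, 1)]
print(H(s, 2, 1))   # 3: pin 2 has been 1 for three samples
print(H(s, 1, 0))   # 1: pin 1 has been 0 only since the last sample
print(H(s, 1, 1))   # 0: pin 1 is not currently 1
```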

The simplest digital circuit is a wire with a propagation delay:


\[ W \mbox{ is a wire with propagation delay }t,\mbox{ if } H(s,1,b) \geq t \rightarrow W(s) = b\]

Suppose \(x\) is a wire with propagation delay \(t_1\) and \(y\) is a wire with propagation delay \(t_2\), and define \(W(s) = y(u(s))\) where \(u(nulls)= nulls\) and \(u(s\mbox{ after }e) = u(s)\mbox{ after } (x(s))\) for the vector \((x(s))\) with one element. Then \(W\) is a wire with propagation delay \(t_1+t_2+1\), where the additional 1 unit of delay is from the connection and could be removed by defining \(u(s\mbox{ after }e) = u(s)\mbox{ after } (x(s\mbox{ after }e))\). Whether or not that is a good idea depends on how delays are understood in the device.
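The wire condition only says what the output must be once the input has been stable long enough, so many concrete devices satisfy it. The sketch below picks one such device as an assumption: it follows the input after \(t \geq 1\) stable samples and otherwise holds its last output, starting at 0. It then checks the composite against the stated delay on all short input sequences. The names `make_wire`, `compose` and `is_wire` are hypothetical.

```python
from itertools import product

def H(s, i, b):
    count = 0
    for e in s:
        count = (count + 1) * (e[i - 1] == b)
    return count

def make_wire(t, initial=0):
    """One concrete wire with delay t >= 1: follow the input once it is stable, else hold."""
    def W(s):
        out, run, last = initial, 0, None
        for (b,) in s:                          # events are one-element vectors
            run = run + 1 if b == last else 1   # run equals H(prefix, 1, b)
            last = b
            if run >= t:
                out = b
        return out
    return W

def compose(x, y):
    """W(s) = y(u(s)) with u(s after e) = u(s) after (x(s))."""
    def W(s):
        u = ()
        for n in range(len(s)):
            u = u + ((x(s[:n]),),)
        return y(u)
    return W

def is_wire(W, t, max_len):
    """Check H(s,1,b) >= t -> W(s) = b on every input sequence up to max_len."""
    for n in range(max_len + 1):
        for bits in product((0, 1), repeat=n):
            s = tuple((b,) for b in bits)
            for b in (0, 1):
                if H(s, 1, b) >= t and W(s) != b:
                    return False
    return True

t1, t2 = 1, 2
print(is_wire(compose(make_wire(t1), make_wire(t2)), t1 + t2 + 1, 8))   # True
```

The check is exhaustive only up to length 8 and only for this particular model of a wire, so it supports the claim rather than proving it.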

A state variable g is a NAND gate with two input pins and delay t if and only if

- \( g(s) \in \{0,1\}, \mbox{ for all } s\)

- \(((H(s,1,1) ≥ t) \mbox{ AND }(H(s,2,1) ≥ t))\rightarrow g(s)=0\)

- \(((H(s,1,0) ≥ t) \mbox{ OR } (H(s,2,0) ≥ t)) \rightarrow g(s)=1\)

An RS-Latch with delay \(t\) can be in one of 3 states: SET, RESET, and UNKNOWN, with the following operation: \[RS(nulls,t) = \mbox{UNKNOWN}\] \[ RS(s\mbox{ after } e,t) =\begin{cases} \mbox{SET} &\mbox{if } H(s\mbox{ after }e,1,0) \geq t \mbox{ AND } H(s\mbox{ after }e,2,1) \geq t\\ \mbox{RESET} &\mbox{if } H(s\mbox{ after }e,1,1) \geq t \mbox{ AND } H(s\mbox{ after }e,2,0) \geq t\\ RS(s,t)&\mbox{if } RS(s,t)\in \{\mbox{SET},\mbox{RESET}\} \mbox{ AND } e = (1,1)\\ \mbox{UNKNOWN}&\mbox{otherwise}\end{cases}\]

Say \(L\) is an rs-latch with delay \(t\) if and only if \[ RS(s,t)= \mbox{RESET}\rightarrow L(s)=0 \] \[ RS(s,t)=\mbox{SET} \rightarrow L(s)=1 \]
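The case analysis for \(RS\) can be traced on concrete inputs. This Python sketch assumes events are pairs of 0/1 values and represents the three states as strings; it evaluates the cases in the order they are listed.

```python
SET, RESET, UNKNOWN = "SET", "RESET", "UNKNOWN"

def H(s, i, b):
    count = 0
    for e in s:
        count = (count + 1) * (e[i - 1] == b)
    return count

def RS(s, t):
    """Abstract latch state after the event sequence s, for delay t."""
    state = UNKNOWN
    for n in range(1, len(s) + 1):
        prefix, e = s[:n], s[n - 1]              # prefix plays the role of "s after e"
        if H(prefix, 1, 0) >= t and H(prefix, 2, 1) >= t:
            state = SET
        elif H(prefix, 1, 1) >= t and H(prefix, 2, 0) >= t:
            state = RESET
        elif state in (SET, RESET) and e == (1, 1):
            pass                                  # hold the previous state
        else:
            state = UNKNOWN
    return state

s = [(0, 1), (0, 1), (1, 1), (1, 1)]
print(RS(s[:1], 2))          # UNKNOWN: the inputs have not been stable for 2 samples
print(RS(s, 2))              # SET: set after two samples, then held by (1, 1)
print(RS(s + [(0, 0)], 2))   # UNKNOWN: (0, 0) is neither a hold nor a stable command
```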

Consider a circuit composed by cross connecting two NAND gates. The composite system has the same set of events, but each gate embedded in the system gets one signal from the event and one from the feedback from the other gate. Suppose \(g_1\) and \(g_2\) are NAND gates with delay \(t\) and define \(u_i(nulls)=nulls,\mbox{ for }i \in \{1,2\}\) and \[u_1(s\mbox{ after }e) = u_1(s)\mbox{ after }(g_2(u_2(s)),e_1)\] \[u_2(s\mbox{ after }e) = u_2(s)\mbox{ after }(g_1(u_1(s)),e_2)\]

Then \(r(s) = (g_1(u_1(s)),g_2(u_2(s)))\) has the amazing property that \(r\) is a \(3t+3\) rs-latch. Note that \(H(s,i,b) = H(u_i(s),2,b)\) for \(i \in \{1,2\}\), since the second pin of each gate is fed directly from the event (proof by induction on \(s\)). Consider that if \(H(s,1,0) \geq t+n\) then \(H(u_2(s),1,1) ≥ n\). First prove it for \(n=1\) by induction on \(s\). If \(s=\mbox{nulls}\) then there is nothing to show since \(H(\mbox{nulls},1,0) = 0\). Suppose \(H(s\mbox{ after }e,1,0) > t\); then \(H(s,1,0) \geq t\) and thus \(H(u_1(s),2,0)\geq t\) so, by the NAND properties, \(g_1(u_1(s)) = 1\), which implies \(H(u_2(s\mbox{ after } e),1,1) \geq 1\). By the same reasoning, \(H(s,1,0) > 2t\) and \(H(s,2,1) > t\) imply that \(g_2(u_2(s)) = 0\), etc.
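The cross connected pair can be simulated directly. The NAND conditions leave the output open when neither condition applies, so the sketch below assumes one particular gate: it holds its previous output in that case and starts at 1. It checks the lemma relating \(H(s,1,0)\) to \(H(u_2(s),1,1)\) on all short sequences. The names `make_nand`, `cross_couple` and `lemma_holds` are hypothetical.

```python
from itertools import product

def H(s, i, b):
    count = 0
    for e in s:
        count = (count + 1) * (e[i - 1] == b)
    return count

def make_nand(t, initial=1):
    """One concrete gate meeting the NAND conditions for t >= 1; it holds otherwise."""
    def g(v):
        out = initial
        for n in range(1, len(v) + 1):
            p = v[:n]
            if H(p, 1, 1) >= t and H(p, 2, 1) >= t:
                out = 0
            elif H(p, 1, 0) >= t or H(p, 2, 0) >= t:
                out = 1
        return out
    return g

def cross_couple(g1, g2, s):
    """Return (u_1(s), u_2(s)) for the cross connected pair."""
    u1, u2 = (), ()
    for e in s:
        o1, o2 = g1(u1), g2(u2)      # gate outputs before the event e
        u1 = u1 + ((o2, e[0]),)      # gate 1 sees (g_2 output, e_1)
        u2 = u2 + ((o1, e[1]),)      # gate 2 sees (g_1 output, e_2)
    return u1, u2

def r(g1, g2, s):
    u1, u2 = cross_couple(g1, g2, s)
    return g1(u1), g2(u2)

def lemma_holds(t, max_len):
    """H(s,1,0) >= t + n implies H(u_2(s),1,1) >= n, for every s up to max_len."""
    g1, g2 = make_nand(t), make_nand(t)
    for length in range(max_len + 1):
        for s in product(product((0, 1), repeat=2), repeat=length):
            _, u2 = cross_couple(g1, g2, s)
            if H(u2, 1, 1) < H(s, 1, 0) - t:
                return False
    return True

print(lemma_holds(2, 5))                             # True
g = make_nand(1)
print(r(g, g, ((0, 1),) * 3 + ((1, 1),) * 3))        # (1, 0): set, then held
```

With delay 1 the pair settles to `(1, 0)` while pin 1 is held at 0 and keeps that value once both pins return to 1. This matches the SET case of the latch definition for the first component.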

Distributed Consensus

Suppose we want to define a network of devices communicating by message. We should have a set \(M\) of messages, a set \(I = \{1,\dots k\}\) of network device identifiers.


The only observable output of a network device is the message, if any, it is attempting to send. When the device is not attempting to send a message, fix its output to be some \(nullm\not\in M\).

\[\mbox{A state variable }d \mbox{ is a network device on }M\mbox{ and } I \mbox{ only if } d(s)\in M\cup\{nullm\}\]

Events should include \(M\) (with event \(m\) meaning the device receives message \(m\)), \(tx\) (the device transmits its output message), and \(tick\) for the passage of one unit of time. Of course \(tick \not\in M\) and \(tx \not\in M\). Then define \(R\) and \(S\) to count how many times the device has received or sent a message. \(R\) is easy:

\[R(nulls,m) = 0, \quad R(s\mbox{ after }e,m) = (e=m)+R(s,m)\]

To count how many times a message has been sent we need to monitor the device.

\[\mbox{For a network device }d,\quad S(nulls,m,d) = 0,\quad S(s\mbox{ after }e,m,d) = (d(s)=m)*(e = tx)+S(s,m,d)\quad (A)\]
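Both counters are short folds. The sketch below assumes messages are strings other than `"tx"` and `"tick"`, uses `None` for \(nullm\), and invents a trivial device `echo` that offers the last message it received until it transmits.

```python
TX, TICK, NULLM = "tx", "tick", None

def R(s, m):
    """How many times message m has been received."""
    return sum(1 for e in s if e == m)

def S(s, m, d):
    """How many times device d has transmitted m: a tx event while d's output was m."""
    count = 0
    for n, e in enumerate(s):
        count += (d(s[:n]) == m) * (e == TX)
    return count

def echo(s):
    """Hypothetical device: output the most recently received message until it is sent."""
    out = NULLM
    for e in s:
        if e == TX:
            out = NULLM
        elif e != TICK:
            out = e
    return out

s = ("a", TX, TX, "b", TICK, TX)
print(R(s, "a"), S(s, "a", echo))   # 1 1: the second tx sent nothing
print(R(s, "b"), S(s, "b", echo))   # 1 1
```

\(S\) has to consult the device because the event \(tx\) alone does not say which message was on offer at the time.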

Suppose there is a subset of \(M\) of “request messages”, \(REQ\subset M\), and a one-to-one map \(\rho: REQ\times I\to M\),

\[\rho(m,i)\in M, \quad \rho(m,i) = \rho(m',j) \rightarrow ( (i=j) \mbox{ and } (m=m') ),\]

where \(\rho(m,i)\) is the response message that the device with id \(i\) can use to respond to request \(m\). If a device \(d\) has id \(i\) then \(d\) can only send a response message that responds to a request it has received or a response message it has previously received (so it can retransmit valid response messages).

\[\mbox{If }d\mbox{ has id }i, \mbox{ then } d(s) = \rho(m,j) \rightarrow ( (j=i\mbox{ AND }R(s,m)>0) \mbox{ OR } R(s,\rho(m,j) ) > 0 )\quad (B)\]

Now we can define a network system \(N(s) = (d_1(u_1(s)),\dots, d_k(u_k(s)))\) where each \(d_i\) satisfies properties A and B for id \(i\). The \(u_i\) determine the interconnection. To keep it simple, each \(u_i\) grows by at most one event on each network event:

\[ u_i(nulls) = nulls,\quad u_i(s\mbox{ after }e) \in \{u_i(s)\mbox{ after }e'\mbox{ for }e'\mbox{ in the device alphabet}\} \cup \{u_i(s)\}\]

and no message can be delivered unless it was sent.

\[u_i(s\mbox{ after }e) = u_i(s)\mbox{ after }m \rightarrow \mbox{ for some }j\in I, S(u_j(s),m) > 0\quad (C)\] Here \(S(u_j(s),m)\) abbreviates \(S(u_j(s),m,d_j)\).

It follows that \(R(u_i(s),m) > 0 \rightarrow \mbox{ for some }j, S(u_j(s),m) > 0\). This can be proved by induction on \(s\). We know that \(u_i(nulls) = nulls\) and \(R(nulls,m)=0\) so \(R(u_i(nulls),m)=0\). Suppose the property holds for \(s\) and \(R(u_i(s\mbox{ after }e),m) >0\). If \(R(u_i(s),m) > 0\) then, by the induction hypothesis, \(S(u_j(s),m)>0\) for some \(j\) and so, because \(u_j(s)\) is a prefix of \(u_j(s\mbox{ after }e)\) and \(S\) never decreases, \(S(u_j(s\mbox{ after } e),m) > 0\). Suppose the other case, that \(R(u_i(s),m) =0\). Our hypothesis is that \(R(u_i(s\mbox{ after }e),m)>0\), so it must be that \(u_i(s) \neq u_i(s\mbox{ after }e)\) and, by the definition of \(R\), \(u_i(s\mbox{ after }e)= u_i(s)\mbox{ after } m\). By (C) this means that \(S(u_j(s),m) >0\) for some \(j\), and again \(S(u_j(s\mbox{ after }e),m) > 0\).

Here is a basic safety property essential to distributed consensus:

\[R(u_i(s),\rho(m,j)) > 0 \rightarrow R(u_j(s),m) > 0\]

It follows that if \(R(u_i(s),\rho(m,j))>0\) for a majority of elements \(j\in I\) then a majority have received \(m\) and acknowledged that receipt to device \(i\). A detailed description of a real distributed consensus or atomic broadcast protocol almost certainly needs a mod counter or two. So we might define some \(w_i\) so that \(C(w_i(u_i(s)))\) is the result of embedding the counter defined in the first section in a device.
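A randomized simulation can exercise both the “received implies sent” property and the safety property. Everything concrete in this sketch is an assumption made for illustration: device ids run from 0 rather than 1, there is a single request `"req"` originated by device 0, \(\rho\) is modelled by a tuple, and a random scheduler picks one device per step and hands it a tick, a tx, or any message that has already been sent, which is condition (C).

```python
import random

TX, TICK, NULLM, REQ = "tx", "tick", None, "req"

def rho(m, i):
    """Response of device i to request m; distinct (m, i) give distinct tuples."""
    return ("ack", m, i)

def make_device(i, initiator=False):
    def d(s):
        out = REQ if initiator else NULLM
        for e in s:
            if e == TX:
                out = NULLM              # the offered message has been transmitted
            elif e == REQ:
                out = rho(REQ, i)        # respond only to a request actually received
        return out
    return d

def R(s, m):
    return sum(1 for e in s if e == m)

def S(s, m, d):
    return sum(1 for n, e in enumerate(s) if e == TX and d(s[:n]) == m)

def sent_messages(devices, u):
    out = set()
    for d, ui in zip(devices, u):
        for n, e in enumerate(ui):
            if e == TX and d(ui[:n]) is not NULLM:
                out.add(d(ui[:n]))
    return sorted(out, key=repr)

def simulate(k, steps, seed):
    rng = random.Random(seed)
    devices = [make_device(i, initiator=(i == 0)) for i in range(k)]
    u = [() for _ in range(k)]
    for _ in range(steps):
        i = rng.randrange(k)             # only device i sees an event on this step
        action = rng.choice([TICK, TX] + sent_messages(devices, u))
        u[i] = u[i] + (action,)
    return devices, u

def check(devices, u):
    k = len(devices)
    for i in range(k):
        for m in [REQ] + [rho(REQ, j) for j in range(k)]:
            if R(u[i], m) > 0 and not any(S(u[j], m, devices[j]) > 0 for j in range(k)):
                return False             # received but never sent
        for j in range(k):
            if R(u[i], rho(REQ, j)) > 0 and R(u[j], REQ) == 0:
                return False             # acknowledgement without receipt
    return True

print(all(check(*simulate(3, 40, seed)) for seed in range(10)))   # True
```

The scheduler may deliver a message many times or never, which matches the network described here; the checks only assert that nothing is received or acknowledged out of thin air.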

There is no guarantee in this network (or in real networks) that any messages ever get delivered. Since messages do get delivered in practice, we can validate algorithms by e.g. adding the assumption that messages always get delivered, or that they always get delivered if sent at least \(c\) times (more realistic), or even by adding a probability constraint. A simple one would be that if a message has been sent at least \(c\) times and at least \(t\) ticks have passed since it was first sent, there is some \(p\) so that with probability \(p\) the message has been received.

Structured and jello semantics and “formal methods”

The early versions of this work included the basic insights on primitive recursion, state driven by events, and general products, but were handicapped by the incorporation of a “formal methods” approach which has been very slowly discarded over time. The primary work drawn on here is classical automata theory (e.g. Arbib’s survey and Hopcroft/Ullman’s classic text), primitive recursive function theory (Péter’s books [R, R2] in particular), and Gécseg’s monograph on the general product. All of this can be used within ordinary (informal) mathematics and is only made harder to use and understand if it is entangled in metamathematical machinery, but it took some time for me to fully grasp the problem. The formal methods used earlier came from temporal logic, which has compellingly intuitive formulations of interesting properties in operating and distributed systems, e.g. that some property will eventually become true or always be true or become true in the next state. Unfortunately, as many people discovered once past the initial enthusiasm, temporal logic does not scale because the “semantics” are unstructured and the formal logic basis forces the user to spend a lot of time redefining elementary mathematics for no good reason.

For the network defined above, if we wanted to specify the circuits that detect transmit events down at the logic element level we could, for example, define \(w\) to produce circuit component level events and write, e.g., \(g(w(u_i(s)))\) to see what the state of a NAND gate would be in the state of device \(i\) in the network state determined by \(s\). This is meaningful because the state machine “semantics” has a product structure. In contrast the “Kripke semantics” of temporal logics is an artificial flat construct that can’t carry the weight of something like “the circuit is a component of the network device which is a component of the network.” The “semantics” is technically adequate but is not so much a structure as a blob.

Rereading it, we discover beneath its rigorous writing a fundamental vagueness – Borges

For standard temporal logic semantics, aside from the most general properties – e.g. that the object is a directed unlabeled graph (or a trace of such a graph) and the states are assignment maps on formal variable identifiers – all properties of the semantics must be spelled out in the formal language. Even things as simple as conjunction and negation must be specified as in this equation from Lamport’s TLA

There is nothing intrinsic to the semantic objects that requires a state to map the formal symbol \(\neg F\) to the negation of the value assigned to \(F\). So the unfortunate user of the method has to go back over the grounds of elementary sets, functions, and relations, as well as the basic properties of mathematical reasoning. That’s exactly the intention for metamathematical studies of the foundation of mathematical reasoning, but it’s not clear what foundations of mathematics have to do with understanding how distributed consensus works.

The successor relation between states also has to be defined explicitly.

Generally some time is supposed to pass on each transition to the “next state”, but how that passage of time modifies assignment maps has to be spelled out in the specification. The assignment maps are arbitrary and the relationship between assignment maps in successor states is arbitrary. If state \(\sigma\) is a successor to state \(\sigma'\) there is nothing that this relationship implies about differences or similarities between the two assignment maps. What does “eventually” mean in this context? And then what happens if we compose components: does the time interval sum?

The basic problem is that the underlying semantics is unstructured and the specifications must be written in formal logic, which is unnecessary and clumsy for working or engineering mathematics (Von Neumann called it “refractory”). Both of these issues are present in “formal methods” in general and they come together. Formal logic is powerful enough to prop up the jello semantics, but it can’t take it very far. Some authors have tried to extend or replace automata by e.g. adding clocks (Timed and Hybrid Automata, Alur and Dill) or communications (under the mistaken impression that plain old state machines can’t represent communication). Classical automata (even with the finiteness condition made optional, which was a well known idea in classical automata theory) are fundamental mathematical objects with an underlying monoid structure: they have strong semantics and intrinsic structure. But hybrid automata or Milner transition systems exist only by axiom and they have no intrinsic structure. You can’t factor a Milner transition system, you can only try to show properties by working within its axiomatic specification. Essentially, those objects do not possess a natural factorization, so modeling multiple levels of connected components is difficult.